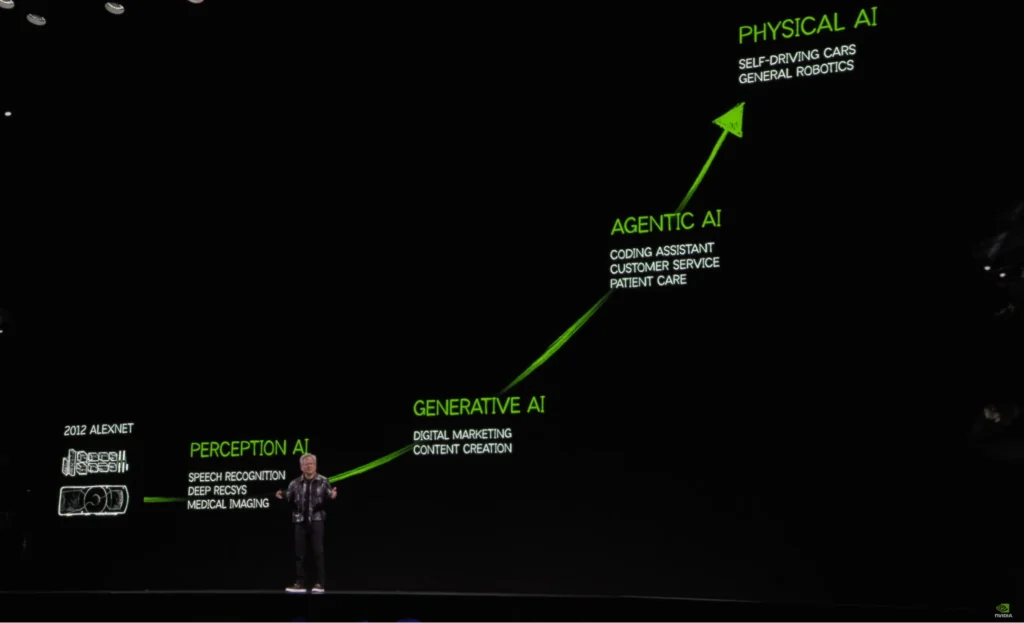

Artificial intelligence is moving beyond software and data, and into systems that interact with the real world.

We’re now seeing AI applied to systems that sense, decide, and act through physical components. This shift is what many are beginning to call Physical AI. The definitions are still forming. But the implications for engineering, and especially for engineering education, are significant. As I often say, “It’s a brand-new domain. There are no experts yet, no defined curriculum.”

At the same time, interest is growing quickly across industry, particularly following NVIDIA’s push into Physical AI. Universities are still figuring out how to respond. There are no standard programs, and no clear path yet for how to train the next generation of engineers in this space.

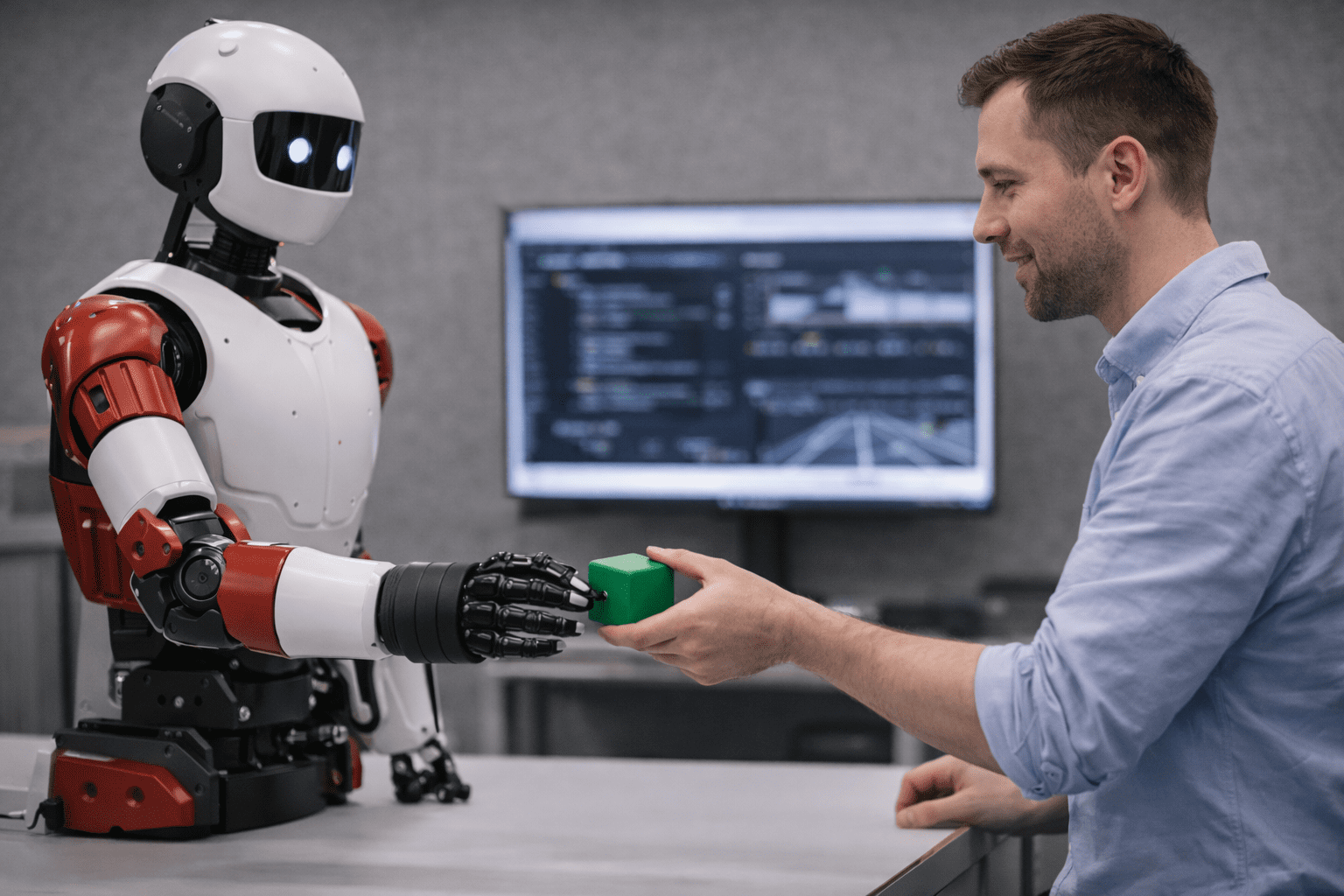

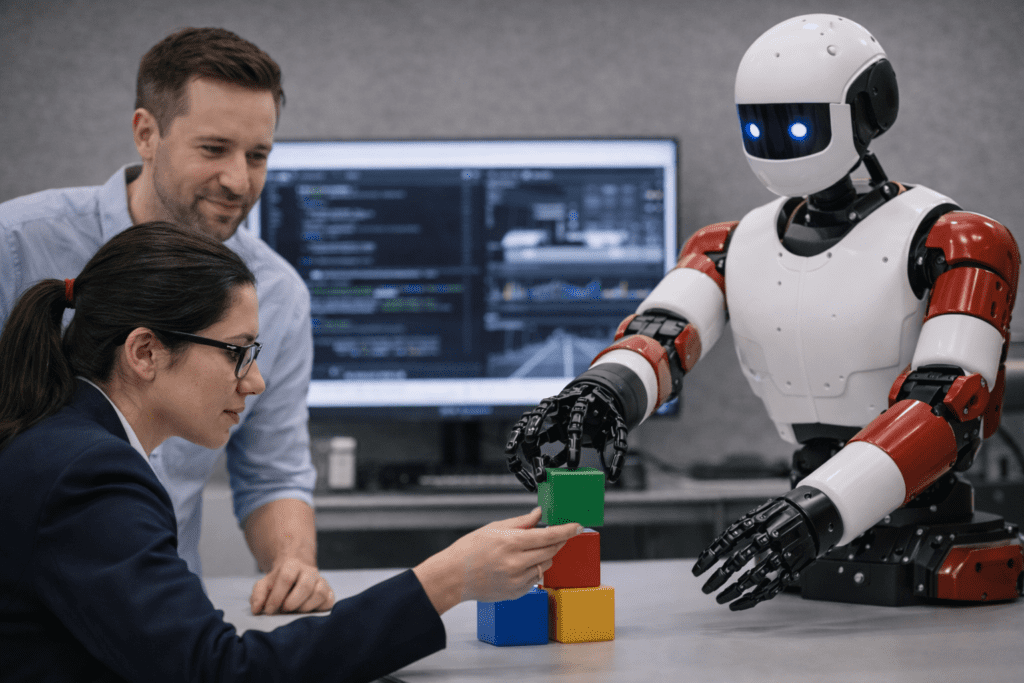

That’s because Physical AI isn’t just another branch of AI. It brings together robotics, control systems, simulation, and machine learning in ways most programs don’t currently support. And it changes what AI systems are expected to do. Instead of just analyzing data, these systems must perceive, decide, and act in real time, while handling uncertainty in the physical world.

We’re already seeing what makes this possible. Earlier this year, humanoid robots performed coordinated acrobatic routines alongside human performers at the Chinese New Year gala, broadcast live on national TV. These were not pre-programmed sequences, but systems trained to execute complex physical behaviors consistently in a shared environment. That would have been impossible even a year ago.

But it also highlights the challenge. Physical AI is not just about model accuracy. It is about building systems that are stable, safe, and reliable in the real world. And this is where the limitations of simulation start to become clear.

Why simulation alone is not enough

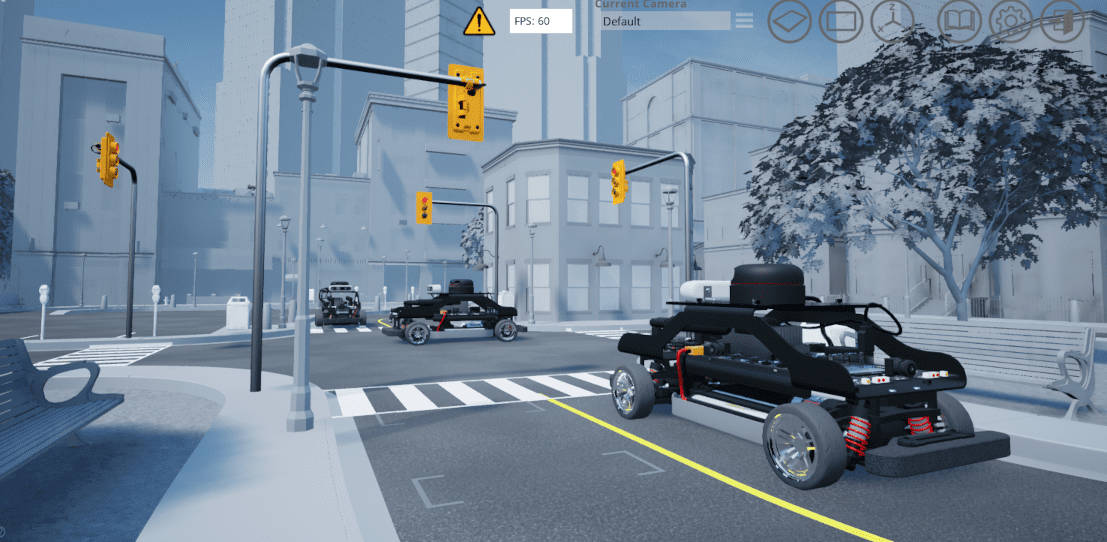

Simulation is and will continue to be a critical part of engineering in the Physical AI era. We simulate before we build. That’s standard practice across every engineering discipline.

But with Physical AI, I see a common assumption that simulation is enough. It isn’t. Even the best simulation environments can’t fully capture how real systems behave. You start to see differences in sensor timing, communication delays, actuator response and system integration.

And those differences matter. This is how I usually describe it:

“It’s not just the physics. It’s the plumbing — how everything actually behaves in a real system.”

That’s where things break down. A system that works in simulation can fail when you deploy it. And that gap, what we call the sim-to-real gap, is one of the biggest challenges in Physical AI today.
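To make the “plumbing” point concrete, here is a deliberately tiny sketch, not a Quanser tool or the author’s method: a proportional controller driving a single integrator, stepped with forward Euler. The gain, the 0.1 s time step, and the two-sample latency are arbitrary assumptions, and the latency is only a crude stand-in for sensor timing, communication delay, and actuator response. The identical controller settles when commands apply instantly, as an idealized simulator assumes, and oscillates with growing amplitude once they arrive two samples late.

```python
def simulate(gain: float, delay_steps: int, steps: int = 200, dt: float = 0.1) -> list[float]:
    """Toy closed loop: integrator plant x' = u under proportional control u = -gain * x.

    delay_steps is an assumed stand-in for sensor, communication, and actuator
    latency: the command applied at step k was computed delay_steps samples earlier.
    """
    x = 1.0                             # initial error the controller should drive to zero
    in_flight = [0.0] * delay_steps     # commands computed but not yet applied
    trajectory = []
    for _ in range(steps):
        in_flight.append(-gain * x)     # controller reacts to the current measurement
        u = in_flight.pop(0)            # actuator applies the oldest pending command
        x += dt * u                     # forward-Euler step of the plant
        trajectory.append(x)
    return trajectory


ideal = simulate(gain=8.0, delay_steps=0)    # what an idealized simulator sees
delayed = simulate(gain=8.0, delay_steps=2)  # same controller, two samples of latency

print(f"ideal:   peak |x| over last 50 steps = {max(abs(v) for v in ideal[-50:]):.2e}")
print(f"delayed: peak |x| over last 50 steps = {max(abs(v) for v in delayed[-50:]):.2e}")
```

In this discrete model the zero-latency loop shrinks the error by a factor of 1 − gain·dt = 0.2 every step. With two samples of latency the update becomes x[k+1] = x[k] − 0.8·x[k−2], whose characteristic polynomial z³ − z² + 0.8 has roots outside the unit circle, so the oscillation grows. Real hardware adds noise, saturation, and jitter on top, but this shows how timing alone can break a loop that looked fine in simulation.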

Closing that gap requires more than simulation alone. It requires systems to be tested and validated under conditions that reflect how they will actually behave in the real world. Even in cases like the coordinated robotic performances mentioned earlier, achieving that level of consistency depends on extensive validation beyond simulation.

Understanding the Physical AI workflow

To move from simulation to reliable real-world performance, a more structured approach is starting to emerge. Across industry and research, the workflow is converging around a few key stages:

- Simulation: where you model and test initial ideas

- Training: where you collect data and train models, often using cloud infrastructure

- Validation: where you test against environments that reflect real hardware behavior

- Deployment: where you run on physical systems
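The stages above can be sketched as a toy pipeline with a gate between training and deployment. This is a hypothetical illustration, not a description of any particular toolchain: “training” is reduced to ranking a handful of controller gains by squared error in an ideal, zero-latency simulation, and “validation” re-runs each candidate across the range of actuation delays we assume the hardware will exhibit. The plant, the gains, the delay range, and the tolerance are all assumptions chosen for illustration.

```python
from typing import Optional

DT = 0.1  # assumed control period in seconds (illustrative)


def closed_loop(gain: float, delay_steps: int, steps: int = 200) -> list[float]:
    """Toy integrator loop: u = -gain * x, applied delay_steps samples late."""
    x, in_flight, traj = 1.0, [0.0] * delay_steps, []
    for _ in range(steps):
        in_flight.append(-gain * x)   # command computed from the current state
        x += DT * in_flight.pop(0)    # oldest pending command reaches the plant
        traj.append(x)
    return traj


def train_in_simulation(candidates: list[float]) -> list[float]:
    """Stand-in for training: rank gains by squared error in an ideal, zero-latency sim."""
    def sim_cost(gain: float) -> float:
        return sum(v * v for v in closed_loop(gain, delay_steps=0))
    return sorted(candidates, key=sim_cost)


def validate(gain: float, delays: range, tol: float = 0.05) -> bool:
    """Stand-in for validation: the loop must settle for every latency expected on hardware."""
    return all(max(abs(v) for v in closed_loop(gain, d)[-50:]) < tol for d in delays)


def pipeline(candidates: list[float], hardware_delays: range) -> Optional[float]:
    """Simulation -> training -> validation gate -> deployment decision."""
    for gain in train_in_simulation(candidates):   # best-in-simulation first
        if validate(gain, hardware_delays):
            return gain                            # first gain that survives validation
        print(f"gain {gain}: stable in simulation, rejected at validation")
    return None                                    # nothing safe to deploy


deployed = pipeline([1.0, 3.0, 5.0, 8.0, 10.0], hardware_delays=range(0, 3))
print(f"deploy gain: {deployed}")
```

Here the gains that score best in simulation (10 and 8) are exactly the ones validation rejects, and the pipeline falls back to gain 5, trading some simulated performance for tolerance to latency. That is the transition problem in miniature: the ranking produced in one stage does not survive the next unless the gate between them reflects how the hardware actually behaves.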

Each of these stages is important. But in my experience, the biggest issues show up in the transitions between them.

“Most systems don’t fail in simulation, they fail when you try to run them in the real world.”

That’s why validation is becoming such a critical step.

At Quanser, this is an area we’ve focused on for years, building platforms that allow researchers and students to move from theory and simulation into real-time implementation, with systems that behave consistently across both environments.

What this means for academia

For universities, this creates both an opportunity and a challenge. On one hand, Physical AI aligns directly with areas that are already seeing strong growth: robotics, autonomous systems, human-robot interaction, defense and dual-use applications.

There is real momentum, including funding and industry demand. On the other hand, there’s no clear model yet for how to teach it. What I’m seeing is that research is leading the way.

“Curriculum will follow, but research and validation come first.”

That’s how this typically evolves. New capabilities are developed in research environments first. Only later do they become structured courses or degree programs. For institutions, that means the focus right now should be on enabling research, especially in areas that involve real-world system validation.

This is also where lab infrastructure becomes important. It’s not just about having access to simulation tools, but about having systems that let students and researchers test, iterate, and validate their work under realistic conditions.

Preparing engineers for Physical AI

This shift also changes what we expect from engineering graduates.

It’s no longer enough to understand AI in isolation. Engineers need to be comfortable working across control systems, sensors and actuators, real-time system behavior and the full pipeline from simulation to deployment.

And just as importantly, they need hands-on experience.

You can’t fully understand these systems without working with something that behaves like the real world. This is where integrated lab environments, combining simulation, control, and hardware, start to play a more central role in education.

Looking ahead

Physical AI is still developing. The platforms are evolving, the workflows are maturing, and the standards haven’t been set yet.

But the direction is clear. We’re moving toward a world where intelligence is embedded in physical systems, not just software. Building those systems requires more than strong AI models. It requires the ability to design, test, and validate against real-world conditions.

For universities, the question is how quickly they adapt to that shift. There is still an opportunity to help define what Physical AI education looks like before it becomes standardized. And the institutions that invest early, particularly in the ability to bridge simulation and real-world implementation, will be the ones best positioned to lead.

We’re already seeing this shift shape how research environments are being designed.

At Quanser, we’ve been working at the intersection of control, simulation, and real-time platforms for more than 35 years, long before the term “Physical AI” started gaining traction. What’s changed is not the fundamentals, but how these pieces are now coming together under a more unified workflow.

You can see this reflected in how our technology has evolved, from foundational control systems to integrated lab environments supporting autonomy and applied AI. Today, that includes platforms where students and researchers work across the full pipeline, from modeling and simulation through to real-time implementation on physical systems.

Later this year, we’ll be introducing a new robotics research platform built with this in mind. It is designed to support the full Physical AI pipeline, from data collection and training through to validation on real systems.

If you’re exploring how to bring Physical AI into your labs or research, this is a conversation worth starting now.

Author Bio:

Paul Karam is Chief Operating Officer and Chief Robotics Officer at Quanser, where he leads the company’s R&D roadmap and business development initiatives. His work spans robotics, control systems, and real-time experimentation platforms used in engineering education and research, from early reinforcement learning applications to the development of research drones and self-driving car labs. His focus is on bringing theory into real-world implementation.