I recently joined Quanser’s Academic Applications team after completing my master’s, and one of my first projects was to create new content for our QArm. Since we had already covered the kinematics of the robot, the natural next step was to branch out into its camera and what users can do with it. With the ever-changing needs of the robotics industry, it is increasingly important for people to work around and share spaces with robots. This makes a robot’s ability to detect and identify objects and people a priority. Cameras and LIDARs are among the sensors that enable robots to understand their surroundings.

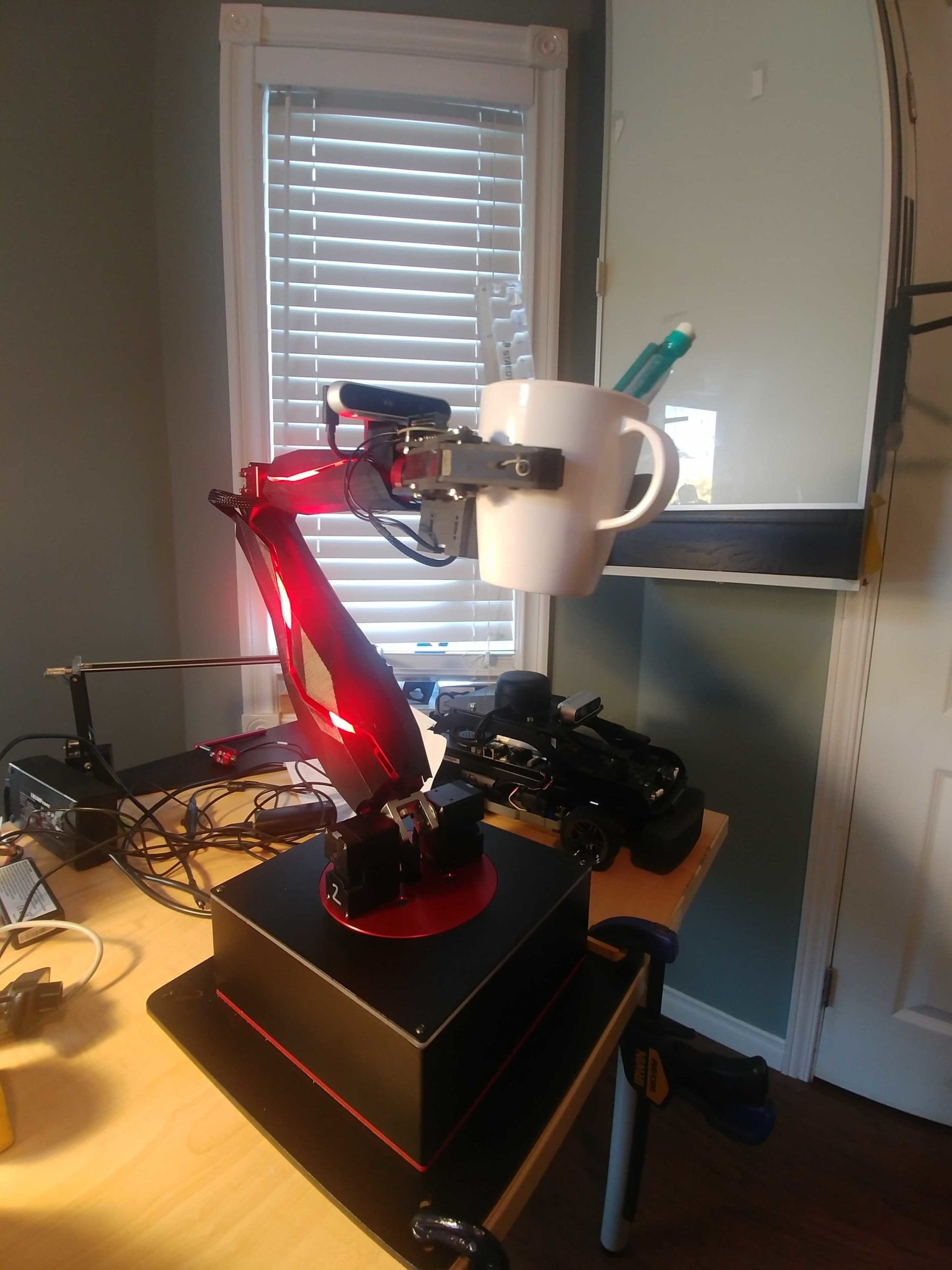

Here at Quanser, most of our robotic platforms are equipped with a camera to aid with perception. For example, our QCar can use it to identify lanes or road signs. The QDrone can detect obstacles, identify fiducial markers for localization, or detect power lines and pipelines. The QArm can find target payloads and manipulate objects. A camera is both vital to autonomous application development and an accelerant for it.

New Vision Labs

In this blog post, we discuss two new labs that will be introduced into the QArm teaching curriculum. The labs introduce students to the digital representation of images and get them familiar with simple image filters. They also cover color spaces and how to identify and localize objects in an image, which are the building blocks for advanced perception applications. Keep an eye on our social media for more information on an upcoming webinar on these labs and computer vision applications across our solutions!

With QUARC, MATLAB, and a webcam, you can follow along. The model files are in the downloadable folder Imaging Labs.zip. If your camera is not depth sensing, replace the Video 3D Capture blocks with Video Capture blocks.

Color Spaces Lab

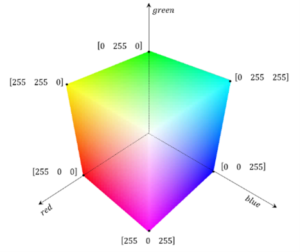

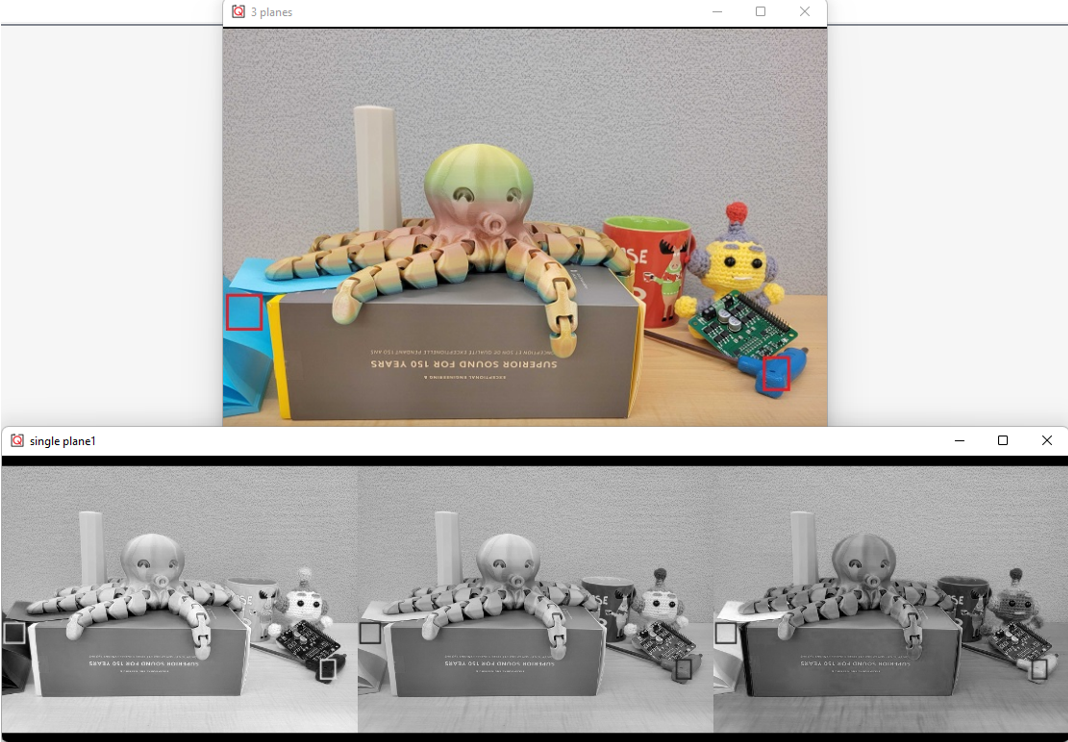

This lab introduces people who are new to computer vision (or to the QUARC blocks for vision) to how digital images are formed. It starts with understanding and visualizing color images in the RGB space shown in Figure 1a. In this space, an object appears lighter in a given plane when it contains more of that color. For example, yellow objects appear brighter in the red and green planes, since mixing these two colors is what produces yellow digitally (Figure 1c). Finally, the lab examines a popular alternative color space, HSV (Figure 1b), and its advantages over the RGB space.
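To make the RGB planes and the HSV representation concrete, here is a minimal sketch in plain Python rather than the QUARC blocks the lab itself uses. The pixel values and the helper names `split_planes` and `to_hsv` are illustrative assumptions, not part of the lab files. It shows that yellow is strong in the red and green planes and absent from the blue plane, and that a darker yellow keeps the same hue in HSV, which is one commonly cited reason HSV is convenient for selecting colors.

```python
import colorsys

# Illustrative 8-bit RGB pixels (assumed values, not taken from the lab images).
pixels = {
    "yellow": (255, 255, 0),
    "dark yellow": (128, 128, 0),
    "red": (255, 0, 0),
    "cyan": (0, 255, 255),
}

def split_planes(rgb):
    """Return the contribution of one pixel to each RGB plane."""
    r, g, b = rgb
    return {"R": r, "G": g, "B": b}

def to_hsv(rgb):
    """Convert 8-bit RGB to (hue in degrees, saturation 0..1, value 0..1)."""
    r, g, b = (c / 255.0 for c in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return (round(h * 360.0, 1), round(s, 3), round(v, 3))

for name, rgb in pixels.items():
    print(name, split_planes(rgb), to_hsv(rgb))
```

Both yellows report a hue of 60 degrees and differ only in value, whereas all of their nonzero RGB channels change with brightness.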

Object Detection Lab

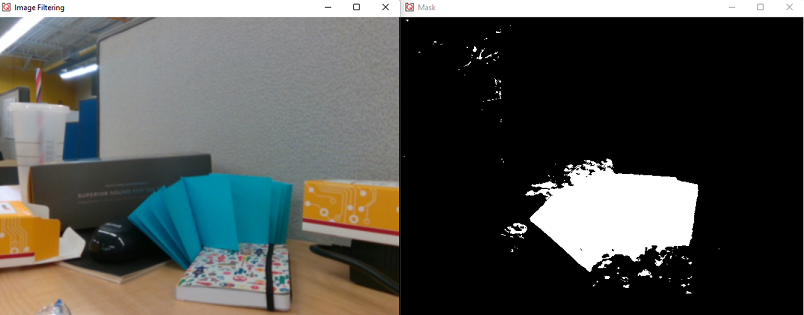

The next lab builds on these concepts. Students learn to extract binary information about colors from the original image (for example, Figure 2a) in the form of masks (Figure 2b). A mask is an image with only two possible values, high or low, determined by some logical expression. Note how the mask highlights the parts of the original image that are cyan. An example use case is shown in Figure 2c: a linear combination of the original image and its grayscale version, with the mask and its inverse as the respective pixel weights.
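The masking and blending steps can be sketched as follows in plain Python. This is an illustration under stated assumptions, not the lab's QUARC model: the hue, saturation, and value thresholds for "cyan" are guesses, the grayscale conversion uses the common Rec. 601 luma weights, and the function names are hypothetical. The blend computes `mask * original + (1 - mask) * grayscale` for every pixel, so masked pixels keep their color and everything else turns gray.

```python
import colorsys

def cyan_mask(image, hue_lo=150.0, hue_hi=210.0, min_sat=0.4, min_val=0.2):
    """Binary mask (1 = cyan-like) for an image given as rows of 8-bit RGB tuples.

    The threshold values are assumptions chosen for illustration.
    """
    mask = []
    for row in image:
        mask_row = []
        for r, g, b in row:
            h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
            hit = hue_lo <= h * 360.0 <= hue_hi and s >= min_sat and v >= min_val
            mask_row.append(1 if hit else 0)
        mask.append(mask_row)
    return mask

def to_gray(pixel):
    """Grayscale version of one pixel using Rec. 601 luma weights (an assumption)."""
    r, g, b = pixel
    y = round(0.299 * r + 0.587 * g + 0.114 * b)
    return (y, y, y)

def highlight(image, mask):
    """Per pixel: mask * original + (1 - mask) * grayscale."""
    out = []
    for row, mask_row in zip(image, mask):
        out.append([
            tuple(m * c + (1 - m) * gc for c, gc in zip(px, to_gray(px)))
            for px, m in zip(row, mask_row)
        ])
    return out

# A tiny 2x2 test image: cyan, red, light gray, and a slightly bluish cyan.
image = [
    [(0, 255, 255), (255, 0, 0)],
    [(200, 200, 200), (0, 200, 220)],
]
mask = cyan_mask(image)
result = highlight(image, mask)
print(mask)
print(result)
```

Thresholding on hue rather than on raw RGB values is a design choice that makes the mask less sensitive to how brightly the object is lit.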

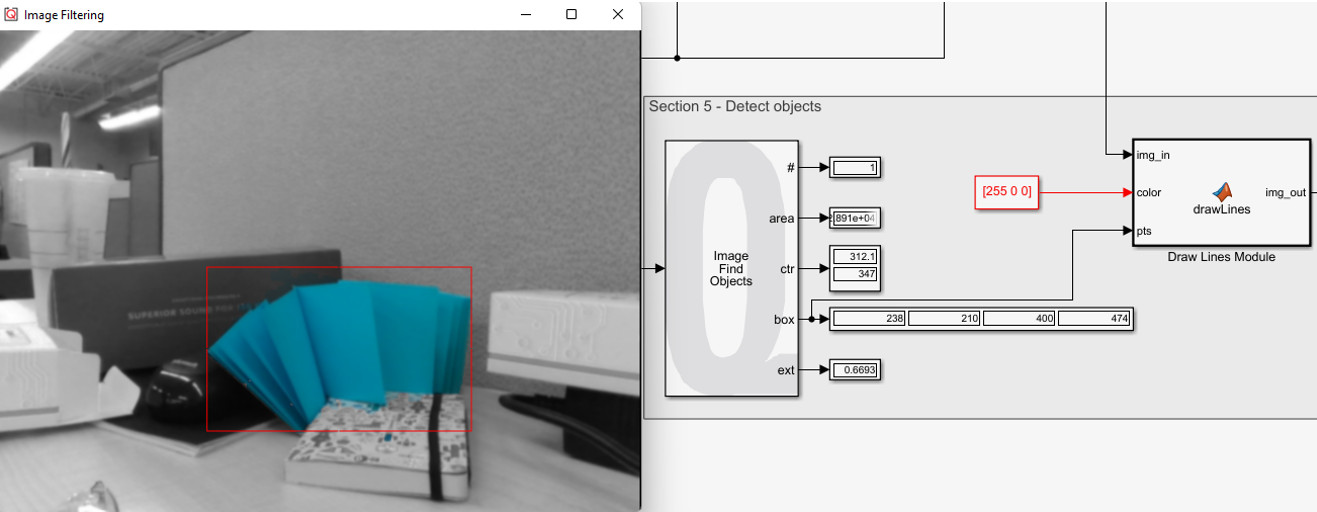

Next, dilation and erosion filters can clean up the mask. This is followed by connected-component labelling, which identifies groups of connected pixels that form an object. The labelling provides useful information, such as bounding boxes, centroids, and blob areas, that can then be used to localize the object (Figure 2d).
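As a rough sketch of this cleanup and labelling stage, the plain-Python example below erodes and then dilates a small binary mask with a 3x3 neighborhood, and labels the remaining blobs with a flood fill. The ordering (erosion first), the 3x3 window, the 4-connectivity, and all function names are assumptions made for illustration; the QUARC blocks used in the lab may behave differently.

```python
from collections import deque

def _morph(mask, keep):
    """Apply a 3x3 neighborhood test to each pixel; out-of-bounds neighbors are ignored."""
    rows, cols = len(mask), len(mask[0])
    out = [[0] * cols for _ in range(rows)]
    for y in range(rows):
        for x in range(cols):
            window = [
                mask[j][i]
                for j in range(max(0, y - 1), min(rows, y + 2))
                for i in range(max(0, x - 1), min(cols, x + 2))
            ]
            out[y][x] = 1 if keep(window) else 0
    return out

def erode(mask):
    """A pixel survives only if every pixel in its window is set."""
    return _morph(mask, all)

def dilate(mask):
    """A pixel is set if any pixel in its window is set."""
    return _morph(mask, any)

def label_blobs(mask):
    """4-connected component labelling via breadth-first flood fill."""
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    blobs = []
    for y0 in range(rows):
        for x0 in range(cols):
            if not mask[y0][x0] or seen[y0][x0]:
                continue
            queue = deque([(y0, x0)])
            seen[y0][x0] = True
            pixels = []
            while queue:
                y, x = queue.popleft()
                pixels.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if 0 <= ny < rows and 0 <= nx < cols and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            ys = [p[0] for p in pixels]
            xs = [p[1] for p in pixels]
            blobs.append({
                "area": len(pixels),
                "bbox": (min(xs), min(ys), max(xs), max(ys)),  # x_min, y_min, x_max, y_max
                "centroid": (sum(xs) / len(xs), sum(ys) / len(ys)),  # (x, y)
            })
    return blobs

# A 3x3 object plus one stray noise pixel on the right edge.
mask = [
    [0, 0, 0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0, 0, 0],
    [0, 1, 1, 1, 0, 0, 1],
    [0, 1, 1, 1, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
]
cleaned = dilate(erode(mask))  # erosion removes the noise, dilation restores the object
print(label_blobs(mask))
print(label_blobs(cleaned))
```

Before cleanup the labelling reports two blobs; after erosion and dilation only the 3x3 object remains, and its bounding box and centroid are what a tracker would use to localize it.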

Closing Remarks

At the end of these labs, students will highlight objects of a certain color in a live video stream and localize them with a bounding box, as shown in the video below, where we track a notebook while moving it.

These labs serve as a gentle introduction to a few fundamental steps in computer vision. The QUARC blocks allow rapid development of high-level algorithms for prototyping real-life examples, and the examples are hardware agnostic. You can use these labs to introduce imaging concepts to anyone starting out with MATLAB and QUARC.

Make sure to look out for our upcoming webinar, where we will be showing video demonstrations of much more!