Self-Driving Car Lab

A Complete Turnkey Ecosystem that Accelerates Research, Enhances Teaching, and Engages Students from Recruitment through Graduation.

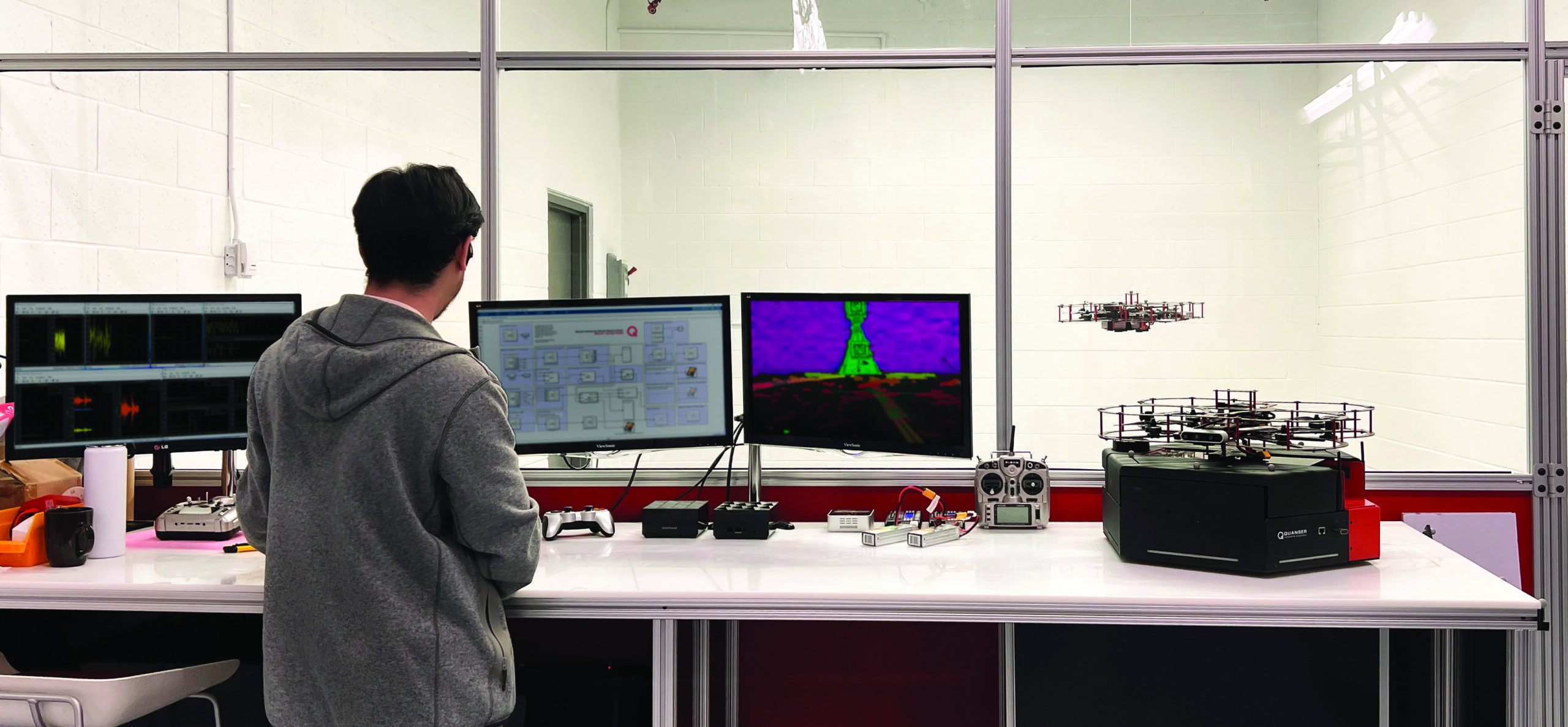

The Self-Driving Car Lab is a unique, ever-growing ecosystem that enables a turnkey experience for teaching, research, outreach, and student competition in autonomous driving. This open-architecture lab comprises a 1/10th-scale, fully instrumented, NVIDIA GPU-powered autonomous car and a range of components that create a realistic and customizable testing environment. Further enhanced by high-fidelity digital twins, comprehensive academic resources, and multi-software support, the lab addresses a wide range of academic needs in self-driving through a single platform.

Product Details

At the center of the Self-Driving Car Lab is QCar 2, a 1/10th-scale autonomous vehicle that brings self-driving concepts into a practical academic setting. With NVIDIA GPU compute, cameras, an IMU, an encoder, and LiDAR, it provides a strong foundation for work in perception, localization, mapping, navigation, and end-to-end workflows in AI-based autonomy. Around it, a realistic and customizable test environment with reconfigurable road panels, traffic signs, communication infrastructure, programmable traffic lights, and other accessories supports flexible learning, experimentation, and validation.

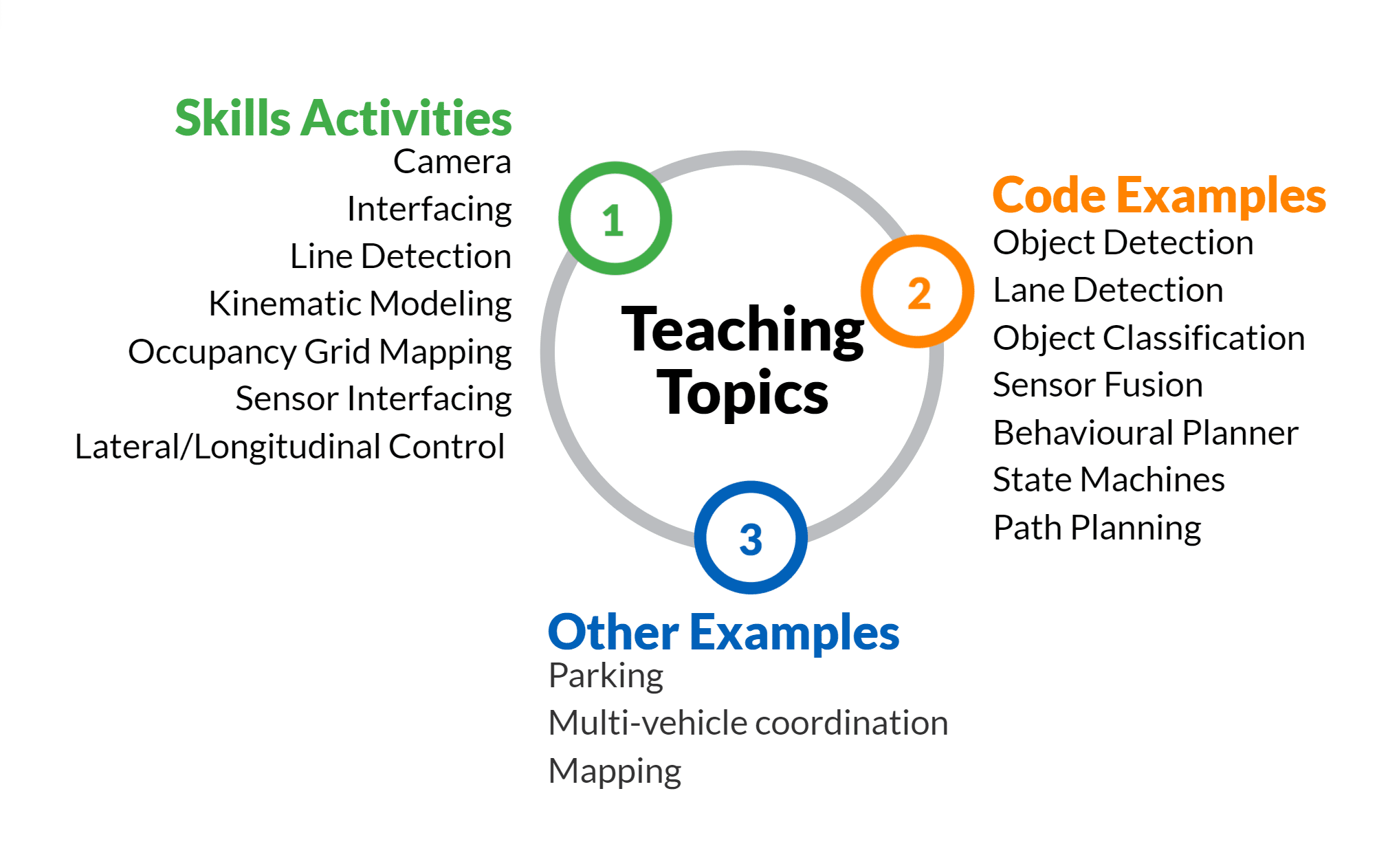

A high-fidelity digital twin module allows users to develop, test, and refine workflows before moving to hardware, while also enabling hybrid, remote, and off-campus learning. Comprehensive academic resources and research examples help connect foundational topics such as camera calibration, sensor fusion, and vehicle dynamics to more advanced work in SLAM, computer vision, reinforcement learning, collaborative autonomy, and multi-agent systems. With compatibility across MATLAB/Simulink, Python, C++, and ROS 2, along with support for 20+ APIs, the Self-Driving Car Lab fits naturally into a wide range of courses, research programs, outreach activities, and student competition projects.

| | |
|---|---|
| Turnkey Hardware | |
| Infrastructure | |
| Digital Twin Subscription | QLabs Virtual QCar 2 Module |
| Software Licenses | QUARC™ Complete Lab License |
| QUARC for Simulink® | |
| Quanser APIs | |
| TensorFlow | |
| Python™ 2.7 / 3 & ROS 2 | |
| CUDA® | |
| cuDNN | |
| TensorRT | |
| OpenCV | |
| DeepStream SDK | |
| VisionWorks® | |
| VPI™ | |
| GStreamer | |
| Jetson Multimedia APIs | |
| Docker containers with GPU support | |
| Simulink® with Simulink Coder | |
| Simulation and virtual training environments (Gazebo and Quanser Interactive Labs) | |
| Multi-language development supported with Quanser Stream APIs for inter-process communication | |
| Communication | |
